It’s not every day that you log on to Twitter and see a racist tweet from a known troll popping up in your normally social justice-infused stream, but that’s exactly what happened to activists and followers of a select few feminist sites earlier this week. Notorious troll Andrew Auernheimer took advantage of Twitter’s self-service ad platform to embed a promoted tweet with a white power message, and he went on to boast about how easy it was. Auernheimer even offered to do it again, if his followers helped him out with some Bitcoin.

“Whites need to stand up for one another and defend ourselves from violence and discrimination,” the tweet informed users, a message that was later taken down by Twitter. It was targeted at anti-racist and social justice-oriented Twitter users, reflecting certain flaws in the implementation of the algorithm designed to catch questionable promoted tweets. Twitter has a potentially serious problem on its hands, as Auernheimer, also known as “weev,” isn’t the first and likely won’t be the last to pull this trick.

“Someone had paid to ensure that this message would show up in users’ feeds,” wrote Jacob Brogan at Salon, illustrating the problem with policing promoted tweets in any meaningful way. “And that meant Twitter was making money from its presence.”

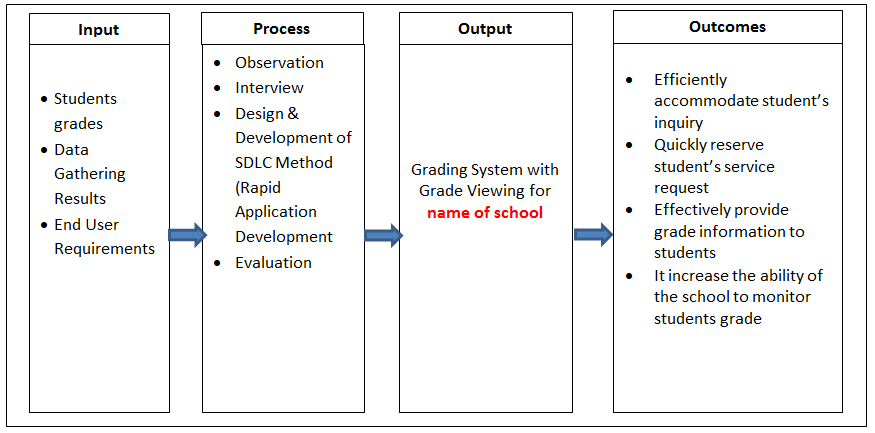

One feature of targeted advertising allows advertisers to serve ads specifically to followers of other users. This allows organizations to reach people who are most likely to be interested in their products and services; for example, a manufacturer of cleaning products might want to target people who follow high-profile cleaning and organizing Twitter accounts, including individuals and magazines. Advertisers can choose target dates and cap their spending with a maximum budget, ensuring they don’t get stuck with a high advertising bill for promoted tweets that get numerous impressions. This combination of features allowed weev to send a racist missive exploding across the timelines of those most likely to be upset about it, and it cost him virtually nothing.

He wasn’t the first to use Twitter ads as a tool to lash out at activists, or simply to troll. In 2013, the Abortion Gang, a popular reproductive justice website, highlighted a racist, anti-choice tweet sponsored by CNSNews, a notoriously conservative Christian news outlet. Another promoted tweet in 2013 was aimed at gamers and managed to be both misogynistic and not safe for work.

Meanwhile, during the protests in Ferguson, Mo., a number of corporate advertisers made fools of themselves with timed ads that weren’t suspended during the protests. Users were surprised and often furious to see corporate advertising sandwiched between tweets about racial justice, police violence, and the situation on the ground; much of the reporting at the protests came not from the reporters locked into a media pen, but from activists and citizen journalists. In some cases, the language and framing of the ads were particularly unfortunate, as in the case of a promoted tweet saying “War is coming!” as part of a campaign for Game of War—Fire Age.

In theory, Twitter does have a policy regarding content in promoted tweets, and it does use an algorithm to flag and suspend content that violates its terms of service. It bars hate speech as well as “inflammatory content,” such as tweets that promote self-harm. In addition, the site at times suspends specific advertising in response to breaking news events, picking up on key phrases that might be inappropriate. You likely don’t want to be advertising a disaster survival game, for example, when the news is dominated by a major earthquake in Nepal.

Building effective algorithms, however, is very challenging. It’s extremely difficult to catch every single instance of a potentially hateful, inflammatory, or harmful sponsored tweet, because computers are not always as smart as human beings, and they are particularly bad at linguistic nuance.

One obvious issue for algorithms associated with self-service advertising is the fact that they may miss dogwhistles along with keywords and phrases used in provocative ways. “Chink,” for example, can be a word used to describe a nook or cranny—but it can also be a racial slur. Organizations and individuals trying to produce material that violates the terms of service can learn which phrases and words trigger the algorithm and work around them.

Weev’s tweet was a classic example, as none of the individual words in the tweet were offensive, making it very hard for a keyword-based algorithm to catch. He was too smart to use racial slurs, knowing that the ad might not have been accepted. Like other trolls, he could also have opted for flooding: submitting a barrage of sponsored ads in the hope that some would slip through the algorithm and others would not be immediately flagged and reported as abusive.
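
To make the weakness concrete, here is a minimal sketch of a word-list filter, written in Python. It is not Twitter’s actual system, whose details are not public; the function name `flag_by_keywords` and the placeholder blocklist are assumptions made purely for illustration.

```python
import re

# Hypothetical placeholder blocklist. A real list would be far larger
# and curated by people with relevant expertise.
BLOCKED_TERMS = {"slurone", "slurtwo"}


def flag_by_keywords(text, blocked=BLOCKED_TERMS):
    """Return True if any blocked term appears as a whole word in text."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return bool(words & blocked)


# A message containing a listed term is caught.
caught = flag_by_keywords("this ad contains slurone in plain sight")

# A message built only from ordinary words passes, whatever it means.
missed = flag_by_keywords("a message whose individual words are all ordinary")
```

A filter like this only ever sees individual words. It cannot tell a slur from the same word used innocently, and it has nothing to say about a message whose hostility lies in its overall meaning or in whom it targets.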

The problems with Twitter’s algorithm were brought home in November 2014, when Women, Action, and the Media (WAM) partnered with Twitter on an unprecedented experiment: allowing feminists to participate in manual evaluation of flagged tweets. Users could submit abuse reports to both Twitter and WAM, which would determine if they merited immediate action. The feminist organization looked at nearly 700 tweets over the course of the collaboration.

“That partnership,” wrote Robinson Meyer at the Atlantic, “has been widely greeted as a step forward, a sign Twitter is finally taking harassment seriously. To my eye, though, it just seems like another stopgap—and further evidence Twitter isn't yet willing to invest to protect its most vulnerable users.”

Similar concerns arise with the implementation of any algorithm. Twitter may argue that it’s functionally impossible to accept ads in volume—and at profit—if it manually reviews every single submission. If that’s the case, however, it needs to back its advertising with a much more robust flagging algorithm to filter promoted tweets like weev’s out of the system, as his comments never should have made it out into the wild.

Twitter may want to consider an algorithm that evaluates users themselves before looking at their ads. As a well-known troll, for example, weev is blocked by a large number of people, a strong sign that any promoted tweets he produces may be offensive. In addition, the firm needs to be working with outside consultants who have experience in areas that internal personnel do not, such as experts on racial dog-whistles and transphobia.
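
One way such an account-level check might look, sketched in Python under stated assumptions: the `AdvertiserStats` fields, the `needs_manual_review` function, and the 5 percent threshold are all hypothetical, chosen only to illustrate routing ads from heavily blocked accounts to a human reviewer before they run.

```python
from dataclasses import dataclass


@dataclass
class AdvertiserStats:
    """Hypothetical per-account signals; not a real Twitter data structure."""
    followers: int
    blocked_by: int


def needs_manual_review(stats, block_ratio_threshold=0.05):
    """Return True when the account is blocked unusually often.

    The ratio of blocks to followers and the default threshold are
    illustrative assumptions, not known Twitter policy.
    """
    ratio = stats.blocked_by / max(stats.followers, 1)
    return ratio > block_ratio_threshold


# A heavily blocked account is held for a human to look at.
troll = needs_manual_review(AdvertiserStats(followers=1000, blocked_by=200))

# An account with few blocks goes through the normal automated path.
typical = needs_manual_review(AdvertiserStats(followers=1000, blocked_by=10))
```

A signal like this would not replace content review, and block counts can themselves be gamed, but it would let a small team of human reviewers concentrate on the advertisers most likely to cause harm.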

Without these kinds of commitments to user safety, Twitter is only reiterating the message that it cares about the bottom line more than its users. The site can already be an extremely hostile place for minorities, and Twitter has done little to address the problem, even in the face of sustained harassment, doxing, bullying, and abuse of users. If Twitter can’t clean up its act, users may opt to find social media that is more interested in protecting their safety and interests.

S.E. Smith is a writer, editor, and agitator with numerous publication credits, including the Guardian, AlterNet, and Salon, along with several anthologies. Smith also serves as the Social Justice Editor for xoJane and will be co-chairing Wiscon 40—the preeminent feminist science-fiction conference—in 2016.

Photo via mozzercork/Flickr (CC BY 2.0)